Decoding Entropy: A Credit Risk Modelling Perspective

By: Tejas Mhaskar

Abstract:

Since its inception, the concept of Entropy has been applied in various fields such as Computer Science, Quantitative Finance and Physics. Its precise definition varies slightly depending on the field in which it is applied. This paper examines the concept of Entropy and its application in Credit Risk Model Development and Validation.

Non-Technical Introduction

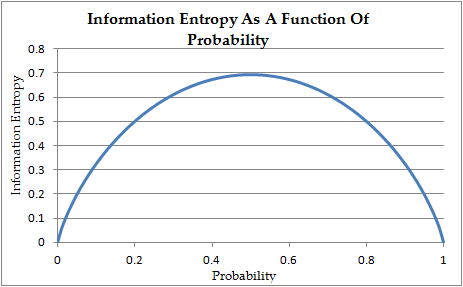

Entropy (Shannon’s Entropy) is a probabilistic measure of uncertainty, whereas Information is a measure of the reduction in that Entropy. Let us take the example of a “fair coin” to distinguish between these two terms. Before we flip the coin, we are uncertain about the outcome. After the coin is flipped, the uncertainty about the flip immediately drops to zero, since we now know the outcome, i.e. heads or tails. This in turn means that we have gained information.

Now, how does one measure the uncertainty about the outcome (heads or tails) before the coin is flipped? How does one quantify this uncertainty about the flip? This is where ‘Entropy’ comes into the picture.
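To make the coin example concrete, here is a minimal sketch using the standard definition of Shannon's Entropy, H = -Σ pᵢ log₂(pᵢ), measured in bits. The function name `shannon_entropy` and the 90/10 biased coin are illustrative assumptions, not taken from the paper, and the full paper may use a different notation or logarithm base.

```python
import math

def shannon_entropy(probabilities):
    """Shannon entropy in bits of a discrete distribution (hypothetical helper).

    Uses p * log2(1 / p), which equals -p * log2(p); zero-probability
    outcomes contribute nothing and are skipped.
    """
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)

# Before the flip: heads and tails are equally likely.
fair_coin = shannon_entropy([0.5, 0.5])

# Illustrative assumption: a coin that lands heads 90% of the time.
biased_coin = shannon_entropy([0.9, 0.1])

# After the flip: the outcome is known with certainty.
known_outcome = shannon_entropy([1.0])

# Information gained is the reduction in entropy caused by observing the flip.
information_gained = fair_coin - known_outcome

print(f"Fair coin entropy:   {fair_coin:.3f} bits")
print(f"Biased coin entropy: {biased_coin:.3f} bits")
print(f"After the flip:      {known_outcome:.3f} bits")
print(f"Information gained:  {information_gained:.3f} bits")
```

Under these assumptions the fair coin carries exactly one bit of uncertainty, the biased coin carries less because its outcome is easier to guess, and observing the flip removes the uncertainty entirely.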
